Academy Series #1: Give Users Back Control of Their Data

At Fixmeapp we believe that building something meaningful requires more than just code. It requires curiosity, critical thinking, and a willingness to question the status quo. That’s why we have been collaborating with students from KTH Royal Institute of Technology and Aalto University on research that directly shapes how we build Fixmeapp.

This is the first post in our Academy Series, where we present thesis work done in collaboration with us, share what the students found, and explore what it means for the future of Fixmeapp. First up: Mateusz Kaszyk and Morgan Bjälvenäs from KTH and their research, "Design and Prototyping of a User-centered Consent Flow".

What they found

The research starts from an uncomfortable truth: most consent mechanisms on the internet today don’t actually give users meaningful control or insight. Cookie banners, long privacy policies, and pre-selected checkboxes are often designed to get compliance, not to give you genuine choice. Let's be honest: platforms don't collect your data to protect you. They collect it because data is worth money. Mateusz and Morgan wanted to explore what a consent flow could look like when it's designed the other way around: starting from the user, not from legal compliance or business value.

They built a working prototype and tested it with 15 participants. The results were encouraging. The design scored 97% for being free from manipulative patterns, the highest score across all categories. Users also rated usability, user control, and privacy by default highly (all at 87%).

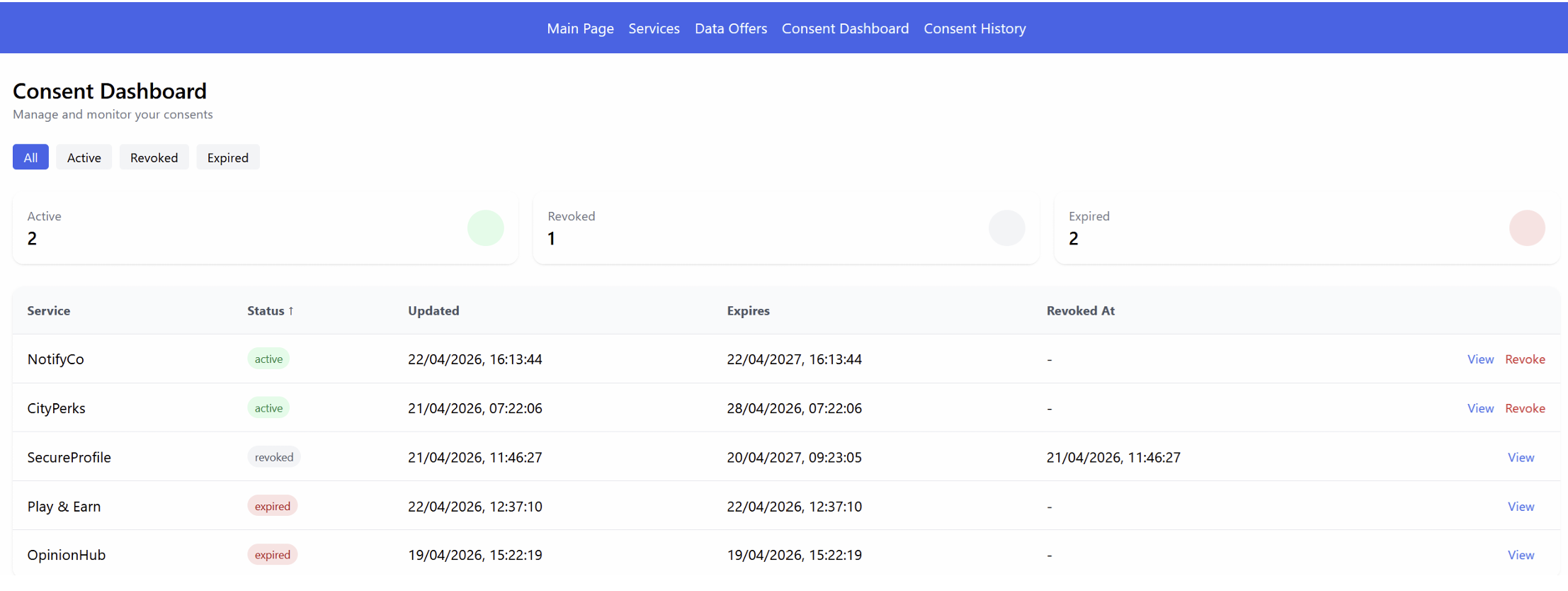

The consent dashboard (a central place where you can see all your active consents, modify them, or withdraw them entirely) was the most praised feature. People genuinely valued having one clear place to stay in control.

The consent dashboard

Where it gets interesting

The biggest challenge they identified was scalability. As the number of data categories, third parties, and purposes grows, consent interfaces risk becoming overwhelming. The prototype's scalability score was 53%, the lowest in the study, and qualitative feedback confirmed it: users felt the interface got heavy when too much information appeared at once.

As Mateusz and Morgan put it: "The trade-off between transparency and usability emerged as a central tension — providing comprehensive information about data processing tends to increase cognitive burden, yet withholding information undermines the transparency needed for genuine informed consent."

This is a real tension in building honest products. More transparency means more information. More information risks overwhelming people. The research concluded that good design alone isn't enough — the underlying system also needs to minimize what data it collects in the first place.

Less data, fewer decisions, clearer consent.

Why this matters for us

At Fixmeapp, we’re building a platform founded on trust, where service providers and users connect. Data flows through that connection: bookings, preferences, communication. We take that responsibility seriously, and having researchers dig into how consent should actually work, grounded in GDPR, Privacy by Design, and real user testing, gives us a stronger foundation to build on.

We’re grateful to Mateusz and Morgan for choosing to do this work with us.

Their prototype and findings will directly inform how we continue to develop the privacy layer of Fixmeapp.

If you’re curious, their full paper is available on request.

Stay tuned for more great research done with KTH and Aalto University in collaboration with Fixmeapp.

/ Team Fixmeapp